Autonomous Vehicle AI Explained: The Future of Self-Driving Car Technology and Beyond


Key Takeaways

- Autonomous vehicle AI uses advanced neural networks to empower cars to make driving decisions without human input.

- Core technologies include machine learning, computer vision, and sensor fusion working in concert.

- The technology scales from ADAS features to fully hands-free, Level 4 autonomy with platforms like NVIDIA’s Alpio and Mercedes-Benz MB.OS.

- Autonomous AI systems are expanding beyond cars into drones, aerospace, and defense sectors.

- Significant challenges remain around sensor reliability, data scarcity, public trust, and regulatory frameworks.

Table of contents

- 1. Understanding Autonomous Vehicle AI: Core Technologies Powering Self-Driving Cars

- 2. Autonomous AI Systems Use Cases Across Industries

- 3. How AI Powers Autonomous Technologies: Methods Driving Intelligence

- 4. Challenges in Autonomous AI: Overcoming Barriers to Safe Autonomy

- Conclusion: The Road Ahead for Autonomous Vehicle AI and Autonomous AI Systems

- Frequently Asked Questions

Autonomous vehicle AI refers to artificial intelligence systems that let vehicles perceive their environment, reason about complex scenarios, and make driving decisions without human intervention. At their core, these systems often rely on end-to-end neural networks: deep learning models that map raw camera feeds and other sensor data directly to steering, acceleration, and braking commands.

Understanding autonomous vehicle AI matters now because 2026 is shaping up to be a landmark year for the commercialization of robotaxis and for breakthroughs in AI platforms such as NVIDIA’s Alpio and the Alpamayo open AI models. These advances will reshape the self-driving car landscape and extend to AI drones, unmanned systems, aerospace, and defense.

In this post, we give an overview of how self-driving car AI technology works, where it is applied across autonomous systems, and the key challenges facing the industry. We break down the essential AI technologies (machine learning, sensor fusion, and reinforcement learning) and explore how they power next-generation autonomous systems. We also chart the expanding use of autonomous AI systems in drone navigation, aerospace operations, and defense, supported by recent innovations and market forecasts.

For a conceptual overview, see the NVIDIA Cosmos world model, which integrates language, vision, 3D environment mapping, and action planning in a unified AI framework.

1. Understanding Autonomous Vehicle AI: Core Technologies Powering Self-Driving Cars

At the heart of autonomous vehicle AI are several interlocking technological pillars that enable vehicles to perceive and navigate their environment with human-like understanding and precision.

Machine Learning: Deep Learning Models and Transformers

- Autonomous vehicles leverage machine learning with an emphasis on deep learning architectures like convolutional neural networks (CNNs) and transformers. For a detailed understanding of machine learning concepts and breakthroughs that propel such AI systems, see machine learning breakthroughs 2026.

- CNNs process visual data to detect and classify objects such as cars, pedestrians, traffic signs, and lane markings.

- Transformer models extend AI’s ability to reason over complex temporal sequences, supporting prediction of other road users’ behaviors.

- These models form the backbone of object recognition, scene understanding, and decision-making in the vehicle’s perception stack.
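
To make the CNN bullet above more concrete, here is a minimal sketch of the sliding-window operation a convolutional layer applies to an image. It is an illustration only: the `conv2d` helper, the toy `patch`, and the hand-written `vertical_edge` filter are assumptions made for this example, and a real perception stack would learn its filter weights from data using a deep learning framework.

```python
def conv2d(image, kernel):
    """Valid cross-correlation of a 2D list `image` with a 2D list `kernel`."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            # Multiply the kernel with the window whose top-left corner is (r, c).
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


# Toy 4x5 grayscale patch: dark road (0) on the left, bright lane paint (9) on the right.
patch = [
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
]

# Hand-written vertical-edge filter (hypothetical); a trained CNN learns such weights.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

response = conv2d(patch, vertical_edge)
print(response)  # [[0, 27, 27], [0, 27, 27]]
```

The response is zero over the uniform road surface and large wherever the window overlaps the dark-to-bright boundary, which is the kind of low-level cue that later layers combine into lane-marking or object detections.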

Computer Vision: Real-Time Environmental Awareness

- Computer vision enables vehicles to “see” by analyzing real-time camera images and video.

- This AI-driven vision system identifies dynamic and static elements of the environment—other vehicles, obstacles, road conditions, and signs.

- Continuous video feeds processed at high frame rates help create a spatial and semantic understanding of the vehicle’s surroundings. For insights on how generative AI enhances visual processing, refer to generative AI tools for productivity.

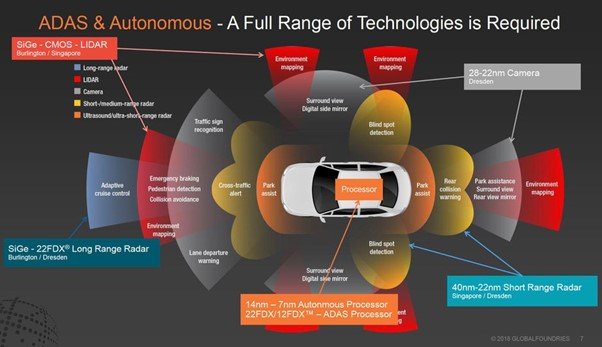

Sensor Fusion: Combining Data for a Unified Perception

- Autonomous AI fuses data from multiple sensor modalities, including:

  - Cameras (visual spectrum)

  - Radar (depth and velocity sensing)

  - Ultrasonic sensors (close-range detection)

- This sensor fusion approach yields a high-fidelity, comprehensive environmental model that overcomes the limitations of any single sensor.
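
One simple way to see why fusing sensors helps is inverse-variance weighting, sketched below. This is a textbook technique chosen for illustration, not the method used by any platform named in this article; the `fuse` helper and the camera and radar numbers are hypothetical.

```python
def fuse(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Less noisy sensors (smaller variance) get proportionally more weight.
    Returns the fused value and the variance of the fused estimate.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical distance-to-obstacle readings: (metres, variance in m^2).
camera = (42.0, 4.0)  # camera depth estimate, assumed noisier
radar = (40.0, 1.0)   # radar range estimate, assumed more precise

fused_distance, fused_variance = fuse([camera, radar])
print(fused_distance, fused_variance)  # close to 40.4 and 0.8
```

The fused variance is lower than that of either input, which is the quantitative version of the claim that a fused model is better than any single sensor. Production systems typically use richer estimators such as Kalman filters or learned fusion networks.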

End-to-End Neural Networks and Decision Systems

- End-to-end training systems integrate raw sensor inputs—from cameras and radars—to output direct control commands like steering angles and throttle inputs.

- NVIDIA’s Alpio platform exemplifies this approach, reasoning over human-like trajectories, predicting paths, and making driving decisions based on vast synthetic and real-world datasets.

- Such platforms allow vehicles to emulate human decision-making with enhanced precision and safety.

Scalability from ADAS to Hands-Free Driving

- Autonomous vehicle AI technology scales across autonomy levels:

  - ADAS (Advanced Driver Assistance Systems) at L1 and L2 provide features like adaptive cruise control and lane-keeping.

  - Higher levels L3 and L4 enable eyes-off-road driving with increased automation and over-the-air (OTA) software updates.

- Industry collaborations, such as the Mercedes-Benz MB.OS platform (integrated with NVIDIA AI) and Geely Afari systems, showcase urban deployments of L3 and L4 autonomy.

- Market forecasts predict robotaxi software revenues hitting approximately $1 billion by 2046, reflecting the growing dominance of self-driving car AI technology.

For more detailed technical background and market insights, explore:

- NVIDIA Cosmos and neural network overview on YouTube: link

- IDTechEx autonomous driving research report: https://www.idtechex.com/en/research-report/autonomous-driving-software-and-ai-in-automotive/1111

- CES 2026 automotive AI applications: https://autovista24.autovistagroup.com/news/the-automotive-update-carmakers-accelerate-ai-applications-at-ces-2026/

2. Autonomous AI Systems Use Cases Across Industries

While self-driving cars remain the most visible application, autonomous AI has far-reaching use cases across multiple sectors, enabled by shared AI architectures like NVIDIA’s Rubin and Cosmos platforms.

AI Drones and Unmanned Systems: Navigation and Complex Missions

- Autonomous drones rely on AI for navigation, trajectory planning, and mission execution.

- Key abilities include:

  - Processing telemetry data for flight path adjustments

  - Predicting future trajectories using world modeling approaches, often using synthetic datasets generated from models like Cosmos.

- Synthetic datasets address the chronic shortage of real-world drone mission data, mirroring challenges faced in automotive data collection. More on synthetic data and AI research can be found at latest AI trends 2025.

- This allows AI drones to operate safely in complex environments with minimal human input.
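
As a point of reference for the trajectory prediction described above, the sketch below extrapolates a drone's position from telemetry under a constant-velocity assumption. This is a deliberately simple baseline, not a learned world model; the `predict_positions` helper and the telemetry values are hypothetical.

```python
def predict_positions(position, velocity, horizon_s, step_s):
    """Extrapolate future (x, y, z) positions assuming constant velocity."""
    steps = int(round(horizon_s / step_s))
    return [
        tuple(p + v * step_s * k for p, v in zip(position, velocity))
        for k in range(1, steps + 1)
    ]


# Hypothetical telemetry sample: position in metres, velocity in metres per second.
telemetry_position = (0.0, 0.0, 50.0)
telemetry_velocity = (4.0, 0.0, -1.0)  # flying forward while slowly descending

path = predict_positions(telemetry_position, telemetry_velocity, horizon_s=2.0, step_s=0.5)
for point in path:
    print(point)
```

A learned predictor would replace the constant-velocity rule with a model that also accounts for wind, obstacles, and the behavior of other agents, but its output has the same shape: a sequence of future positions that the planner can check for conflicts.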

AI in Aerospace and Defense: Autonomous Flight and Surveillance

- Aerospace applications leverage AI for autonomous flight, enhanced surveillance, and sophisticated defense maneuvers.

- Vision-language models developed by companies like Bosch and Qualcomm interpret both vehicle interiors and surrounding environments.

- AI assists in:

  - Target identification and tracking

  - Autonomous mission planning in contested or remote environments

- Synthetic data simulates rare or extreme defense scenarios, boosting model robustness and preparedness.

Comparative AI Use Cases by Industry Sector

| Domain | AI Role | 2026 Example Platform(s) |
|---|---|---|
| Self-Driving Cars | End-to-end reasoning & control | NVIDIA Alpio, Mercedes-Benz MB.OS |
| Drones/Unmanned | Trajectory prediction & mission | NVIDIA Cosmos synthetic data pipelines |
| Aerospace/Defense | Surveillance & target ID | Qualcomm, Bosch scalable ADAS systems |

Source references for industry use cases:

- CES 2026 autonomous driving and robotics: YouTube

- Global X 2026 AI/Autonomy insights: https://www.globalxetfs.com/articles/ces-2026-autonomous-driving-hits-an-inflection-point/

- Automotive & aerospace AI uses: https://autovista24.autovistagroup.com/news/the-automotive-update-carmakers-accelerate-ai-applications-at-ces-2026/

3. How AI Powers Autonomous Technologies: Methods Driving Intelligence

Autonomous AI systems deploy sophisticated machine learning methodologies to learn, adapt, and operate in dynamic real-world environments.

Reinforcement Learning: Teaching AI Through Feedback

- Reinforcement learning (RL) enables AI agents to learn optimal behaviors via trial and error.

- Agents receive rewards or penalties based on actions, refining policies to maximize success.

- RL is well suited to systems navigating complex scenarios, such as traffic flow or drone flight paths, where explicitly programming every behavior is infeasible.

- Learn more about the latest reinforcement learning advances at machine learning breakthroughs 2026.
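
The reward-and-penalty loop described above can be sketched with tabular Q-learning on a toy problem. Everything here is an assumption made for illustration: a five-cell corridor stands in for the environment, and the reward values and hyperparameters are arbitrary. Real driving or flight policies use far larger state spaces and neural function approximators.

```python
import random

N_STATES = 5                 # corridor cells 0..4; the goal is the last cell
GOAL = N_STATES - 1
LEFT, RIGHT = 0, 1
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration rate


def step(state, action):
    """Move one cell; reward +1 on reaching the goal, -0.01 for every other move."""
    nxt = max(0, state - 1) if action == LEFT else min(GOAL, state + 1)
    reward = 1.0 if nxt == GOAL else -0.01
    return nxt, reward


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            # Epsilon-greedy: mostly exploit the current estimate, sometimes explore.
            if rng.random() < EPSILON:
                action = rng.choice((LEFT, RIGHT))
            else:
                action = max((LEFT, RIGHT), key=lambda a: q[state][a])
            nxt, reward = step(state, action)
            # Q-learning update: move the estimate toward reward + discounted best next value.
            target = reward + GAMMA * max(q[nxt])
            q[state][action] += ALPHA * (target - q[state][action])
            state = nxt
    return q


q_table = train()
greedy_policy = [max((LEFT, RIGHT), key=lambda a: q_table[s][a]) for s in range(GOAL)]
print(greedy_policy)  # 1 means "move right"
```

After training, the greedy policy moves right in every non-goal cell: the agent has learned the shortest route purely from rewards, without being told the rule.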

Neural Networks: Multimodal Processing and Control

- Advanced neural networks—including transformers and end-to-end architectures—are at the center of processing combined sensor inputs such as vision, radar, and telemetry.

- These networks generate real-time control outputs, enabling vehicles and drones to respond with precise actions.

- NVIDIA’s Cosmos platform incorporates models pre-trained on diverse datasets—videos, driving logs, robotic simulations—to create a universal AI understanding.

Real-World Versus Synthetic Data in Autonomous AI Training

- Training robust AI models requires vast amounts of data, often supplemented or replaced by synthetic data generated from high-fidelity simulations.

- Synthetic datasets help cover edge cases and rare events difficult to capture in real life.

- Platforms like Cosmos generate synthetic data that simulate diverse traffic, weather, and operational conditions.
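
The idea of covering rare events with synthetic data can be illustrated with a small scenario sampler that deliberately over-represents edge cases. This is only a parameter sampler, not a simulator and not how Cosmos works; the category names, the `sample_scenarios` helper, and the 30% rare-event share are hypothetical.

```python
import random

WEATHER = ["clear", "rain", "fog", "snow"]
RARE_EVENTS = ["pedestrian_jaywalking", "debris_on_road", "emergency_vehicle"]


def sample_scenarios(n, rare_fraction, seed=0):
    """Sample n scenario descriptions, forcing a fixed share to contain a rare event."""
    rng = random.Random(seed)  # seeded so a batch can be regenerated exactly
    n_rare = round(n * rare_fraction)
    scenarios = []
    for i in range(n):
        scenarios.append({
            "weather": rng.choice(WEATHER),
            "traffic_density": round(rng.uniform(0.0, 1.0), 2),
            "event": rng.choice(RARE_EVENTS) if i < n_rare else None,
        })
    rng.shuffle(scenarios)  # avoid all rare cases sitting at the front of the batch
    return scenarios


batch = sample_scenarios(n=100, rare_fraction=0.3)
rare_count = sum(1 for s in batch if s["event"] is not None)
print(rare_count, "of", len(batch), "scenarios contain a rare event")
```

In real driving logs such events might appear in a tiny fraction of frames; fixing their share in the synthetic batch is one way to make sure the model sees them often enough during training. Each sampled description would then be handed to a simulator or generative model to render sensor data.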

Cross-Platform AI: Parallels Between Vehicles and Drones

- Autonomous vehicle AI platforms like Alpio perform pre-actuation reasoning and trajectory prediction similar to drone mission planning AI.

- Shared AI foundations enable scalability across domains despite operational differences:

  - Vehicles rely heavily on detailed HD maps and OTA updates.

  - Aerospace and drones address rare, long-tail scenarios across diverse geographies.

AI Model Families Accelerating Innovation

- Open AI model families such as Alpamayo provide unified representation learning that accelerates development across both vehicle and drone platforms.

- This convergence reduces development cycles and supports continuous cross-industry advancements.

- For complementary insights into AI platform comparisons and ecosystem growth, see AI platform comparison 2026.

Key sources for AI methods and innovation:

- NVIDIA Cosmos and Rubin overview: link

- IDTechEx autonomous driving AI insights: https://www.idtechex.com/en/research-report/autonomous-driving-software-and-ai-in-automotive/1111

- CES 2026 AI breakthroughs: https://www.globalxetfs.com/articles/ces-2026-autonomous-driving-hits-an-inflection-point/

4. Challenges in Autonomous AI: Overcoming Barriers to Safe Autonomy

Deploying autonomous AI systems at scale presents significant technical, ethical, and regulatory challenges demanding continued innovation.

Technical Hurdles

- Sensor accuracy and reliability: Real-world environments are unpredictable; sensors must perform flawlessly under varied conditions (rain, fog, complex urban scenes).

- Data scarcity: Critical edge cases are rare; synthetic data generation (e.g., Cosmos world models) partly addresses this issue but requires continuous refinement. For a deep dive into AI training data and regulatory challenges, visit AI training data regulation guide.

- High computational demands: Achieving reliable Level 3+ autonomy requires enormous processing power for perception, prediction, and control decisions in milliseconds.
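
A quick calculation shows why the millisecond budget mentioned above matters. The sketch below converts end-to-end latency into distance traveled; the per-stage latencies and the 108 km/h speed are hypothetical values chosen to keep the arithmetic easy to follow, not measurements from any system.

```python
def distance_during_latency(speed_kmh, latency_ms):
    """Metres the vehicle covers before a decision made now can take effect."""
    speed_mps = speed_kmh * 1000 / 3600
    return speed_mps * latency_ms / 1000


# Hypothetical per-stage latencies in milliseconds (not measured values).
stage_latency_ms = {"perception": 50, "prediction": 30, "planning_control": 20}
total_latency_ms = sum(stage_latency_ms.values())

blind_distance_m = distance_during_latency(speed_kmh=108, latency_ms=total_latency_ms)
print(total_latency_ms, "ms of latency at 108 km/h is about", blind_distance_m, "m travelled")
```

Under these assumptions the vehicle covers about 3 m before its decision can take effect, so every stage that can be made faster directly shrinks the distance driven on stale information.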

Ethical and Regulatory Concerns

- Public trust: Trust-building is essential for adoption; users must feel confident in autonomous AI’s safety and predictability.

- Approval and accountability: Autonomous vehicles shift accountability away from drivers to manufacturers, necessitating new regulatory frameworks and type-approval standards.

- Robotaxi risks: Scaling robotaxi fleets elevates risks due to potential system errors, network latency, and cybersecurity vulnerabilities.

- Relevant AI regulation and trust insights: AI regulation updates 2025 and trust in automotive AI.

Sector-Specific Impacts

- Vehicles transitioning from L2 to L3 autonomy encounter real-world unpredictability and regulatory scrutiny.

- Drones face challenges from limited mission data and high-stakes environments demanding flawless autonomy.

- Aerospace systems contend with unique geographic complexities and rare event scenarios, requiring extensive validation.

Growth Forecasts and Industry Impact

- Robotaxi fleets are projected to grow at a compound annual growth rate (CAGR) of approximately 50%, demanding rapid AI advancement.

- ADAS markets continue expanding with increased L3 deployments.

- Trust and safety research efforts are ongoing to prepare regulatory bodies and the public for widespread adoption.
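
To put the roughly 50% CAGR figure in perspective, the sketch below compounds it over a few years. The starting fleet of 1,000 vehicles and the five-year horizon are hypothetical; only the growth rate comes from the forecast cited above.

```python
def project_fleet(initial_size, cagr, years):
    """Return the fleet size for each year 0..years under constant compound growth."""
    return [initial_size * (1 + cagr) ** year for year in range(years + 1)]


# Hypothetical starting fleet; the 0.5 growth rate mirrors the ~50% CAGR forecast.
projection = project_fleet(initial_size=1000, cagr=0.5, years=5)
for year, size in enumerate(projection):
    print(f"year {year}: {size:,.0f} vehicles")
```

At that rate a fleet grows more than sevenfold in five years (1.5 to the fifth power is about 7.6), which is why the forecast implies equally rapid scaling of validation, remote operations, and compute.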

For in-depth details on challenges and future outlooks:

- Technical and regulatory challenges: https://www.idtechex.com/en/research-report/autonomous-driving-software-and-ai-in-automotive/1111

- Trust and safety insights: https://www.weforum.org/stories/2026/01/trust-automotive-ai-future-of-mobility/

- Growth analysis: https://www.globalxetfs.com/articles/ces-2026-autonomous-driving-hits-an-inflection-point/

- Robotaxi deployment milestone: http://www.avamerica.org/ai-transforms-autonomous-vehicle-deployment-as-2026-marks-industry-turning-point-for-robotaxis/

Conclusion: The Road Ahead for Autonomous Vehicle AI and Autonomous AI Systems

Autonomous vehicle AI rests on a set of unified technologies (end-to-end neural networks, synthetic data production, and shared AI models) that power self-driving cars and a broader range of autonomous systems. The breakthroughs of 2026, exemplified by NVIDIA’s Rubin and Alpio platforms, are accelerating the integration of these physical AI “factories” across sectors.

Continued innovations in self-driving car AI technology are crucial to achieving scalable, safer autonomy in road vehicles and beyond. Future advances will likely include expanding Level 4 autonomy, embedding agentic AI inside vehicles (with Qualcomm and Google leading initiatives), and enhancing public trust via transparent regulation and safety validation.

Autonomous AI systems’ use cases will deepen across drones, aerospace, and defense, fundamentally transforming transportation, logistics, and security domains.

We encourage readers to follow ongoing research, participate in industry discussions, and explore the rapidly evolving landscape where AI meets physical autonomy.

For further exploration, see:

- NVIDIA Cosmos and physical AI factories: YouTube

- CES 2026 mobility innovation: https://autovista24.autovistagroup.com/news/the-automotive-update-carmakers-accelerate-ai-applications-at-ces-2026/

- Trust-building in future mobility: https://www.weforum.org/stories/2026/01/trust-automotive-ai-future-of-mobility/

Frequently Asked Questions

- What technologies enable vehicles to drive themselves?

- Self-driving vehicles rely primarily on machine learning models including convolutional neural networks and transformers, along with sensor fusion from cameras, radar, and ultrasonic sensors, combined via end-to-end neural networks that translate perception into driving actions.

- How do autonomous AI systems in drones compare to self-driving cars?

- Both systems use deep learning and synthetic datasets for training. Drone AI focuses more on trajectory prediction and flight path adjustments in 3D space, whereas vehicles emphasize road scene understanding and control in traffic environments. Shared AI platforms like NVIDIA Cosmos enable cross-domain advances.

- What are the main challenges facing autonomous vehicle AI today?

- Challenges include sensor reliability in difficult environments, scarcity of critical edge case data, computational requirements for real-time decision-making, public trust, regulatory approval frameworks, and cybersecurity.

- How is synthetic data used in autonomous AI development?

- Synthetic data is generated through high-fidelity simulations to supplement real-world data, filling in rare or dangerous edge cases that are difficult to capture otherwise. Platforms like NVIDIA Cosmos generate synthetic traffic, weather, and mission environments for training AI models.

- What is the future outlook for autonomous vehicle AI?

- The outlook is promising: robotaxi fleets are expected to grow, Level 4 autonomy to expand, and the technology to spread into aerospace and defense, alongside continued AI platform innovation and the regulation and trust-building measures needed for mass adoption.