What is AI Ethics? Understanding Ethical Issues in AI Development and How to Build Ethical AI

Estimated reading time: 12 minutes

Key Takeaways

- AI ethics guides the responsible design, use, and impact of AI to align with human values like fairness, privacy, and harm prevention.

- Ignoring ethical concerns can lead to biased results, legal violations, and damaged reputation.

- Key challenges include privacy violations, bias, opacity, and lack of oversight.

- Responsible AI principles—fairness, transparency, accountability, privacy, and safety—help build trust and avoid harm.

- Developers must embed ethics early, audit for bias, engage stakeholders, and maintain oversight to build ethical AI.

Table of contents

- Introduction: What Is AI Ethics?

- Overview of Ethical Issues in AI Development

- Understanding AI Bias and Fairness Explained

- Responsible AI Principles

- AI Transparency and Accountability

- How to Build Ethical AI: Practical Steps

- Conclusion: Embracing What is AI Ethics for a Better Future

- Frequently Asked Questions

Introduction: What Is AI Ethics?

AI ethics refers to the moral principles and practices that shape how AI technologies are created, deployed, and managed. The goal is to ensure AI aligns with core human values like fairness, privacy, and preventing harm. Simply put, it’s about making AI systems that do what’s right for people and society, not just what they can technically do.

This definition is supported by top sources such as IMD, SAP, and IBM, which emphasize that AI ethics:

- Guides AI development toward human-aligned goals

- Promotes fairness in decision-making

- Avoids harm or unjust outcomes

Ignoring AI ethics is risky. Without ethics, AI systems can:

- Produce biased or unfair outcomes

- Violate legal frameworks such as the GDPR (the EU’s data protection regulation)

- Damage a company’s reputation

- Increase social inequalities

On the other hand, ethical AI builds trust among users, improves decisions, and supports long-term success.

AI is quickly becoming part of business operations, hiring processes, and daily life. This widespread use raises new concerns including privacy breaches, unfair discrimination, and erosion of trust. Ethical considerations are no longer optional but essential in today’s AI landscape to prevent harm and promote equity.

Sources:

– IMD: AI Ethics

– SAP: What is AI Ethics?

– IBM: AI Ethics

– Transcend: AI Ethics

– PubMed: AI Ethics

Overview of Ethical Issues in AI Development

Ethical issues in AI development are challenges that developers face and that have real-world consequences for users, businesses, and society.

Key ethical issues include:

- Privacy violations: Using personal data without permission or adequate protection.

- Lack of informed consent: Collecting or processing data without clear agreement from individuals.

- AI misuse: AI tools being used to discriminate or cause harm, intentionally or unintentionally.

Some common problems stem from AI algorithms that are biased, unfair, or opaque:

- Biased algorithms can treat certain groups unfairly, leading to discrimination in areas like hiring or lending.

- Opaque or “black-box” AI makes decisions without clear explanations, which undermines trust among users and regulators.

- Insufficient human oversight allows mistakes or harmful outcomes to go unchecked, increasing risks of societal inequality.

These issues highlight the need for AI transparency and accountability, principles discussed in detail later.

Sources:

– SAP: Ethical Issues in AI

– IBM: AI Ethics Challenges

– IMD: AI Ethics

– Transcend: Ethical AI

– DataCamp: AI Ethics Introduction

Understanding AI Bias and Fairness Explained

Bias and fairness are among the most discussed topics in AI ethics.

What is AI Bias?

Bias in AI happens when systems produce skewed or unfair outcomes, often due to flawed inputs or flawed design. Sources of bias include:

- Training data that reflect historical prejudices or underrepresent certain groups.

- Algorithmic amplification, where AI exaggerates existing patterns of inequality.

- Human decisions during AI design or deployment that introduce or overlook unfairness.
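
Underrepresentation in training data, the first source listed above, is one of the easier problems to check for. The following is a minimal sketch, assuming each record carries a group attribute; the field name `group`, the toy data, and the 10% threshold are illustrative assumptions, not recommended values.

```python
from collections import Counter

def group_shares(records, group_key):
    """Return each group's share of the dataset as a fraction of all records."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}

def underrepresented(shares, min_share):
    """List groups whose share falls below the chosen threshold."""
    return sorted(g for g, s in shares.items() if s < min_share)

# Hypothetical toy training set; "group" is an illustrative attribute name.
training_rows = (
    [{"group": "A"}] * 70 + [{"group": "B"}] * 25 + [{"group": "C"}] * 5
)

shares = group_shares(training_rows, "group")
flagged = underrepresented(shares, min_share=0.10)  # threshold is an assumption
print(shares)
print("Underrepresented groups:", flagged)
```

A check like this only surfaces missing coverage. It does not detect historical prejudice encoded in the labels themselves, which needs outcome-based metrics.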

What is Fairness?

Fairness means treating all individuals equitably, without unjust favoritism or discrimination. It requires AI to avoid bias and provide equal opportunities and outcomes across diverse populations.
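
Fairness can be made measurable in several ways, and the choice of metric is itself a judgment call. As one illustration, the sketch below computes demographic parity, which compares the rate of favorable decisions across groups. The group names and decisions are made-up data, and demographic parity is only one of several competing fairness definitions.

```python
def selection_rate(decisions):
    """Fraction of favorable decisions (1 = favorable, 0 = unfavorable)."""
    return sum(decisions) / len(decisions)

def demographic_parity(decisions_by_group):
    """Return per-group selection rates, their largest gap, and the min/max ratio."""
    rates = {g: selection_rate(d) for g, d in decisions_by_group.items()}
    lowest, highest = min(rates.values()), max(rates.values())
    difference = highest - lowest
    ratio = lowest / highest if highest > 0 else 1.0
    return rates, difference, ratio

# Hypothetical decisions from a screening model, split by group.
decisions = {
    "group_a": [1, 1, 1, 0, 1, 0, 1, 1, 0, 1],
    "group_b": [1, 0, 0, 0, 1, 0, 0, 1, 0, 0],
}

rates, difference, ratio = demographic_parity(decisions)
print(rates, round(difference, 2), round(ratio, 2))
```

A ratio below 0.8 is sometimes treated as a warning sign (the "four-fifths" rule of thumb from US employment guidance), but whether that threshold is appropriate depends on the application and jurisdiction.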

Why Does AI Bias Matter?

Biased AI doesn’t just produce unfair results; it can deeply harm society by:

- Perpetuating discrimination in key areas like lending, hiring, healthcare, and law enforcement.

- Disproportionately impacting marginalized communities, worsening social inequalities.

- Leading to public distrust and legal issues for organizations that deploy biased AI.

Understanding and addressing ethical issues in AI development, especially around bias and fairness, is essential for creating just and sustainable AI technologies.

Sources:

– SAP: AI Bias and Fairness

– Transcend: AI Bias Explained

– DataCamp: AI Bias

– IMD: AI Ethics and Bias

– PubMed: AI Bias Impacts

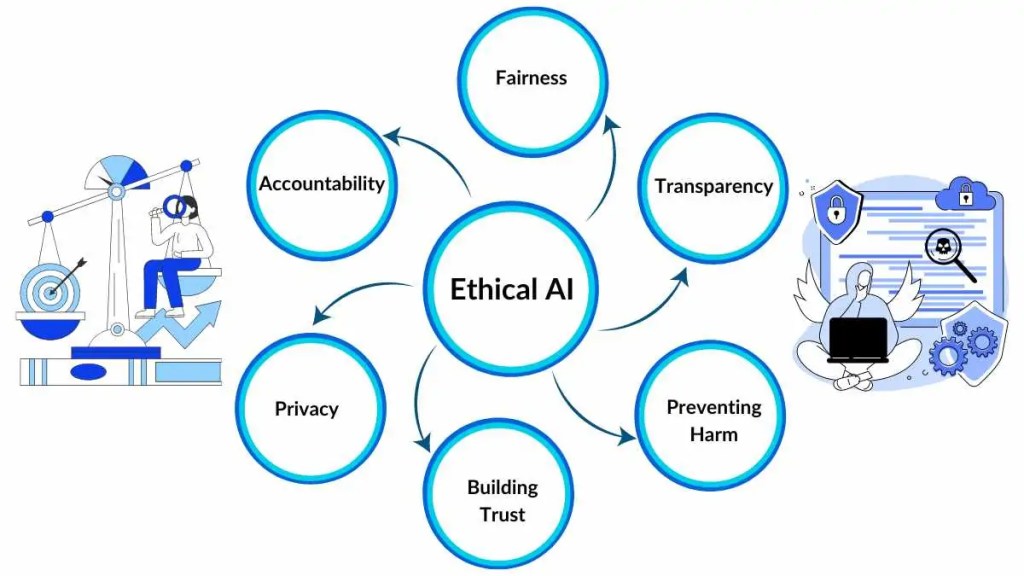

Responsible AI Principles

To counter these challenges, the AI community has embraced responsible AI principles: ethical guidelines drawn from international standards, such as UNESCO’s Recommendation on the Ethics of Artificial Intelligence, and from technology industry leaders.

These five core principles help create trustworthy and fair AI systems:

1. Fairness

AI systems must avoid bias and discrimination. Fairness ensures:

- Equitable treatment of all people, regardless of gender, race, or background.

- Equal access to opportunities and fair evaluation.

2. Transparency

AI processes and decisions should be clear and explainable. Transparency means:

- Users and auditors can understand how AI makes decisions.

- AI systems are not “black boxes” but offer insights into their reasoning.

3. Accountability

Humans are ultimately responsible for AI actions. This includes:

- Clear governance structures, such as ethics committees or oversight boards.

- Mechanisms to address harms and ensure compliance.

4. Privacy

AI must protect individuals’ data and respect consent. Privacy measures include:

- Robust data protection protocols.

- Transparency about data collection and use.
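
As a rough illustration of the privacy measures above, the sketch below drops records that lack consent and replaces a direct identifier with a keyed hash before the data reaches a training pipeline. The field names (`consent`, `email`, `age_band`) and the key are hypothetical. Pseudonymization of this kind reduces exposure but is not the same as anonymization, and it does not by itself satisfy any particular regulation.

```python
import hashlib
import hmac

# Placeholder only: in practice the key would come from a managed secret store.
SECRET_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def prepare_for_training(records, secret_key):
    """Keep only consented records and strip the raw identifier."""
    prepared = []
    for record in records:
        if not record.get("consent"):
            continue  # no recorded consent: exclude the record entirely
        prepared.append({
            "user_ref": pseudonymize(record["email"], secret_key),
            "age_band": record["age_band"],
        })
    return prepared

# Hypothetical raw records.
raw = [
    {"email": "ana@example.com", "age_band": "30-39", "consent": True},
    {"email": "ben@example.com", "age_band": "40-49", "consent": False},
    {"email": "cy@example.com", "age_band": "20-29", "consent": True},
]

prepared = prepare_for_training(raw, SECRET_KEY)
print(len(prepared), "records kept")
```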

5. Safety

AI systems should prioritize human wellbeing and avoid harm. Safety involves:

- Designing systems that prevent physical, psychological, or societal damage.

- Ongoing monitoring to catch and mitigate risks.

Applying these principles throughout the AI lifecycle helps prevent ethical issues and promotes socially beneficial technologies.

Sources:

– SAP: Responsible AI

– UNESCO AI Ethics Recommendation

– IMD: AI Ethics Principles

– IBM: AI Ethics

– Transcend: AI Ethics

– PubMed: AI Safety

AI Transparency and Accountability

Building trust in AI requires strong transparency and accountability.

Transparency: Making AI Understandable

Transparency can be achieved through:

- Explainable AI (XAI) techniques, such as:

  - Feature importance scoring that shows which input features most influence decisions.

  - Decision trees or other interpretable models whose logic users can follow step by step.

  - Model interpretability tools that offer insight into complex algorithms.

- Audit trails that document AI decision-making paths for review and compliance checks.

- Human-in-the-loop systems, where human reviewers guide or override AI when needed.
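
To make feature importance scoring concrete, here is a minimal sketch of permutation importance: shuffle one input feature at a time and measure how much accuracy drops. The model and data are toy stand-ins invented for illustration. Libraries such as scikit-learn provide more complete implementations of the same idea.

```python
import random

def accuracy(model, rows, labels):
    """Share of rows where the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, n_features, repeats=20, seed=0):
    """Average accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            # Rebuild each row with feature j replaced by a shuffled value.
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / repeats)
    return importances

# Hypothetical stand-in model: approves when feature 0 is at least 50
# and ignores feature 1 entirely.
def toy_model(row):
    return 1 if row[0] >= 50 else 0

rows = [(30, 1), (80, 0), (45, 1), (60, 0), (20, 0), (90, 1), (55, 1), (40, 0)]
labels = [toy_model(r) for r in rows]

importances = permutation_importance(toy_model, rows, labels, n_features=2)
print(importances)
```

Because the toy model never reads feature 1, shuffling it cannot change any prediction and its importance is zero, while feature 0 shows a clear drop. On a real system, a large importance for a sensitive attribute or a close proxy for one is a signal to investigate further.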

Accountability: Owning AI Actions

Accountability means clearly assigning responsibility for AI outcomes to:

- Developers and data scientists who build the system

- Corporations deploying AI products

- Regulators enforcing ethical standards

Institutions can form ethics committees or AI governance boards to oversee compliance, provide guidance, and respond to failures or harms.

These mechanisms encourage responsible use of AI and reinforce public confidence.
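
Accountability depends on being able to reconstruct what a system decided and when, which is where audit trails come in. The sketch below shows one possible design, assuming an in-memory list as the store: each entry includes the hash of the previous entry, so later tampering becomes detectable. The record fields (`model_version`, `input_id`, `output`, `reviewer`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry

def _entry_hash(previous_hash, decision):
    """Hash the previous entry's hash together with this decision's content."""
    payload = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()

def append_entry(trail, decision):
    """Append a decision record chained to the entry before it."""
    previous_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    trail.append({
        "decision": decision,
        "previous_hash": previous_hash,
        "hash": _entry_hash(previous_hash, decision),
    })

def verify(trail):
    """Return True only if every entry still matches its recorded hash chain."""
    previous_hash = GENESIS_HASH
    for entry in trail:
        expected = _entry_hash(previous_hash, entry["decision"])
        if entry["previous_hash"] != previous_hash or entry["hash"] != expected:
            return False
        previous_hash = entry["hash"]
    return True

trail = []
append_entry(trail, {"model_version": "v1", "input_id": "req-001",
                     "output": "approve", "reviewer": None})
append_entry(trail, {"model_version": "v1", "input_id": "req-002",
                     "output": "deny", "reviewer": "analyst-7"})

intact_before = verify(trail)
trail[0]["decision"]["output"] = "deny"  # simulate after-the-fact tampering
intact_after = verify(trail)
print(intact_before, intact_after)
```

A production audit trail would also need durable storage, access controls, and retention rules, which are outside the scope of this sketch.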

Sources:

– IMD: AI Transparency

– SAP: AI Accountability

– Transcend: AI Transparency

How to Build Ethical AI: Practical Steps

Knowing how to build ethical AI is key for developers, businesses, and policymakers to ensure AI benefits everyone.

Follow this structured framework:

1. Incorporate Ethical Principles Early

Embed responsible AI principles like fairness, transparency, privacy, and accountability right from the design phase. Don’t treat ethics as an afterthought.

Starting early helps prevent costly redesigns and harmful outcomes.

2. Audit for Bias Systematically

- Use diverse datasets that represent multiple demographics to avoid underrepresentation.

- Apply statistical fairness metrics to detect and measure bias regularly.

- Set up continuous monitoring to catch emergent bias post-deployment.
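
As an example of a statistical fairness metric used in an audit, the sketch below compares true positive rates across groups, a check often called equal opportunity. The data and the 0.1 tolerance are illustrative assumptions. A real audit would use held-out evaluation data and a tolerance agreed with stakeholders.

```python
def true_positive_rate(y_true, y_pred):
    """Among truly positive cases, the share the model predicted as positive."""
    predictions_for_positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    if not predictions_for_positives:
        return float("nan")
    return sum(predictions_for_positives) / len(predictions_for_positives)

def audit_tpr_gap(records, max_gap):
    """Group (group, y_true, y_pred) records and compare TPRs across groups."""
    by_group = {}
    for group, y_true, y_pred in records:
        truths, preds = by_group.setdefault(group, ([], []))
        truths.append(y_true)
        preds.append(y_pred)
    rates = {g: true_positive_rate(t, p) for g, (t, p) in by_group.items()}
    gap = max(rates.values()) - min(rates.values())
    return rates, gap, gap <= max_gap

# Hypothetical evaluation records: (group, actual outcome, model prediction).
records = [
    ("a", 1, 1), ("a", 1, 1), ("a", 1, 1), ("a", 1, 0), ("a", 0, 0),
    ("b", 1, 1), ("b", 1, 0), ("b", 1, 0), ("b", 1, 0), ("b", 0, 1),
]

rates, gap, passed = audit_tpr_gap(records, max_gap=0.1)  # tolerance is an assumption
print(rates, gap, "PASS" if passed else "FAIL")
```

Here qualified members of group "b" are recognized far less often than those of group "a", so the audit fails. Running the same check on a schedule after deployment is one way to catch emergent bias.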

3. Engage Diverse Stakeholders

Include voices from:

- AI developers and data scientists

- Ethicists and social scientists

- Business leaders and users

- Regulators and policymakers

Diverse perspectives improve ethical insight and help spot risks others might miss.

4. Implement Ongoing Monitoring and Human Oversight

- Continuously track AI behavior and performance after deployment.

- Regularly update models to reduce bias or errors.

- Maintain governance structures able to respond to new ethical challenges.
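
One way to connect monitoring to human oversight is to track recent decisions per group and escalate to a reviewer when the rates drift apart. The class below is a minimal sketch of that idea. The window size, gap threshold, and group labels are assumptions chosen for the example, and the escalation step itself is left to the surrounding system.

```python
from collections import deque

class SelectionRateMonitor:
    """Track recent favorable-decision rates per group over a sliding window."""

    def __init__(self, window, max_gap):
        self.window = window      # number of recent decisions kept per group
        self.max_gap = max_gap    # largest tolerated gap between group rates
        self.recent = {}

    def record(self, group, decision):
        """Store a decision (1 = favorable, 0 = unfavorable) for a group."""
        self.recent.setdefault(group, deque(maxlen=self.window)).append(decision)

    def gap(self):
        """Largest difference between any two groups' recent rates."""
        rates = [sum(d) / len(d) for d in self.recent.values() if d]
        return max(rates) - min(rates) if len(rates) >= 2 else 0.0

    def needs_human_review(self):
        """True when the gap exceeds the tolerance and a person should look."""
        return self.gap() > self.max_gap

monitor = SelectionRateMonitor(window=4, max_gap=0.25)
for decision in [1, 1, 0, 1]:
    monitor.record("a", decision)
for decision in [1, 0, 1, 1]:
    monitor.record("b", decision)
flag_initial = monitor.needs_human_review()

# Later decisions for group "b" turn unfavorable, so the rates drift apart.
for decision in [0, 0, 0]:
    monitor.record("b", decision)
flag_after_drift = monitor.needs_human_review()
print(flag_initial, flag_after_drift)
```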

5. Learn from Real-World Examples

- SAP’s use of ethics committees demonstrates strong corporate accountability.

- Microsoft’s responsible AI principles provide a practical model for embedding ethics at scale.

These steps foster ethical AI systems that deliver value while minimizing risks.

Sources:

– IMD: Building Ethical AI

– Transcend: Ethical AI Practices

– SAP: Bias Audits

– IBM: AI Ethics

Conclusion: Embracing What is AI Ethics for a Better Future

To summarize, AI ethics is a vital framework for balancing the vast promise of AI with its potential risks. Ethical AI reduces harms like bias, discrimination, privacy violations, and societal injustice, while promoting trust, fairness, and inclusion.

As AI continues to evolve and permeate every part of life, sustained attention to responsible AI principles is essential. Only through ongoing commitment can we prevent misuse and build AI that benefits all.

Everyone—developers, businesses, regulators, and users—has a role to play. By conducting bias audits, fostering diverse teams, and establishing ethical oversight, we can collectively build trustworthy AI systems.

Let’s commit now to building ethical AI that serves society’s best interests.

Sources:

– IBM: AI Ethics Summary

– DataCamp: AI Ethics Overview

– PubMed: AI Ethics Future

– Ethics Unwrapped: AI Ethics

Frequently Asked Questions

What is AI ethics?

AI ethics refers to the set of moral principles guiding AI design, use, and impact to ensure technologies align with fairness, privacy, and harm prevention.

Why is AI bias a problem?

Bias can lead to unfair treatment of individuals or groups, perpetuate discrimination, and damage trust in AI systems.

How can AI transparency be improved?

By applying explainable AI methods, maintaining audit trails, and involving human oversight to clarify AI decision-making processes.

What are responsible AI principles?

They are ethical guidelines including fairness, transparency, accountability, privacy, and safety that help build trustworthy AI.

How to build ethical AI?

Incorporate ethical principles early, audit regularly for bias, engage diverse stakeholders, maintain oversight, and learn from best practices.

If you want to dive deeper into any topic mentioned here, please check the linked sources for comprehensive insights.